
Hand in hand with the development of information technologies (wireless technologies, social media, big data tools, machine-learning algorithms, etc.), “data” seem to have become the new “oil” of contemporary capitalism (The Economist 2017). This phenomenon has given rise to new categories to explain the production and accumulation of value in capitalism, such as “attention economy” (Davenport & Beck 2001; Celis Bueno 2017), “surveillance capitalism” (Zuboff 2020) or “computational capitalism” (Beller 2018). What all of them have in common is the central role played by “data mining” (“data extractivism”), which positions information as a key commodity in contemporary capitalism.

In relation to the above, one of the terms that has gained particular popularity in recent years is “platform capitalism”, coined by Nick Srnicek (2018). According to this author, given the “prolonged fall in the profitability of manufacturing, capitalism turned to data as a way to sustain economic growth and vitality in a context of stagnation in the productive sector”. The most prominent internet platforms (Google, Facebook, Amazon, Uber, Airbnb, etc.) must, therefore, be understood as part “of a larger economic history” and as “means of generating profitability” in the context of the falling rate of profit of industrial capitalism. In this way, Srnicek argues that such platforms are inaugurating a “new accumulation regime”.

The expression “data extractivism” establishes an analogy between information management and the mining industry, defining data as a raw material that can be extracted, commercialized, refined, processed, and transformed into other commodities with added value. Platforms, for their part, emerge as a “vast infrastructure” necessary to “detect, record and analyse” data (Srnicek 2018). Finally, users appear as a “natural source of raw material”, that is, of data (Srnicek 2018).

The problem with this perspective is that it naturalizes our relationship with data, as if data were a source of pure information preceding any extraction operation. Verónica Gago and Sandro Mezzadra (2017) have made an important contribution to the denaturalization of this conception of data through their discussion of the need for an expanded concept of extractivism to think about contemporary capitalism. In the same vein, the objective of this text is to examine the link between “data extractivism” and capitalism’s valorisation process. Specifically, we will reflect on whether or not the new developments in machine-learning algorithms require a redefinition of Marx’s labour theory of value in order to think about contemporary capitalism. For that purpose, we present three positions that explore this question from different perspectives. First, the thesis of Italian post-workerism on the crisis of the theory of value in post-industrial capitalism is used to define data extractivism as a concrete form of “appropriation of the common”. In this section, the category of the “social factory” (Negri) and the thesis of the “becoming rent of capital” (Vercellone) play a central role. Second, we examine the perspective of the so-called “critical theory of value”, according to which Marx’s theory of value remains essential for a Marxist critique of contemporary capitalism. In this section, Ramin Ramtin’s thesis on the link between capitalism and automation is presented to illustrate why data cannot produce value. Third, we introduce an alternative to this opposition. To do so, we redefine the valorisation cycle of capital by introducing the concept of information.
This implies considering the following: a) the link between information and labour defined by Maurizio Lazzarato in his definition of “immaterial labour”; and b) how Gilbert Simondon’s concept of information can be used to reinforce Lazzarato’s thesis. This twofold consideration will allow us to redefine and historicize the link between human (living) labour and machines in Marx’s theory in order to explore the role of data extractivism in the valorisation process of contemporary capitalism.

Machine learning and data extractivism

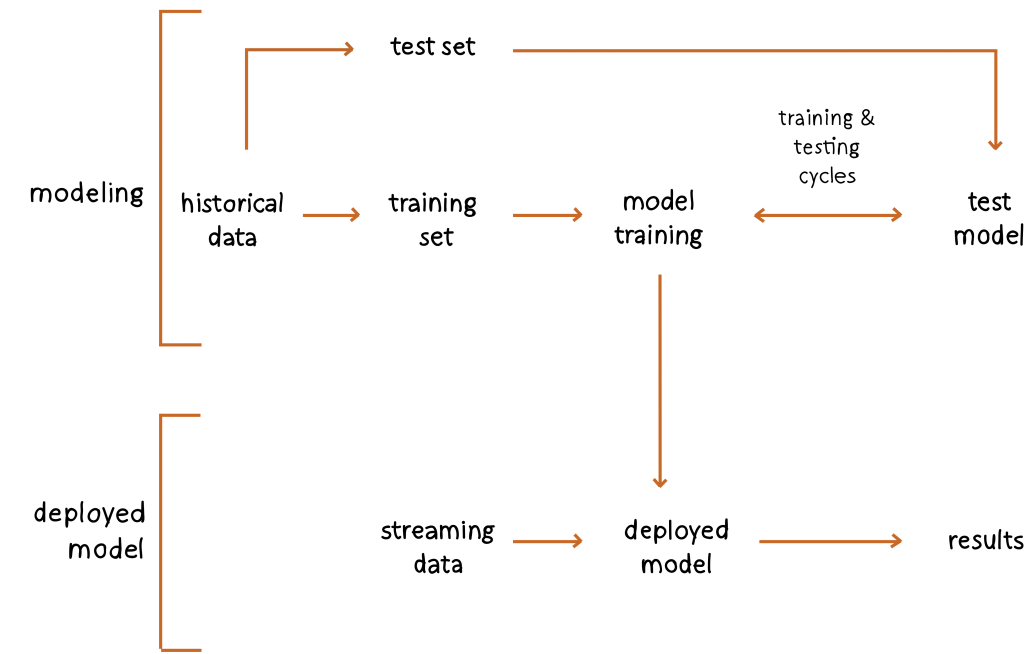

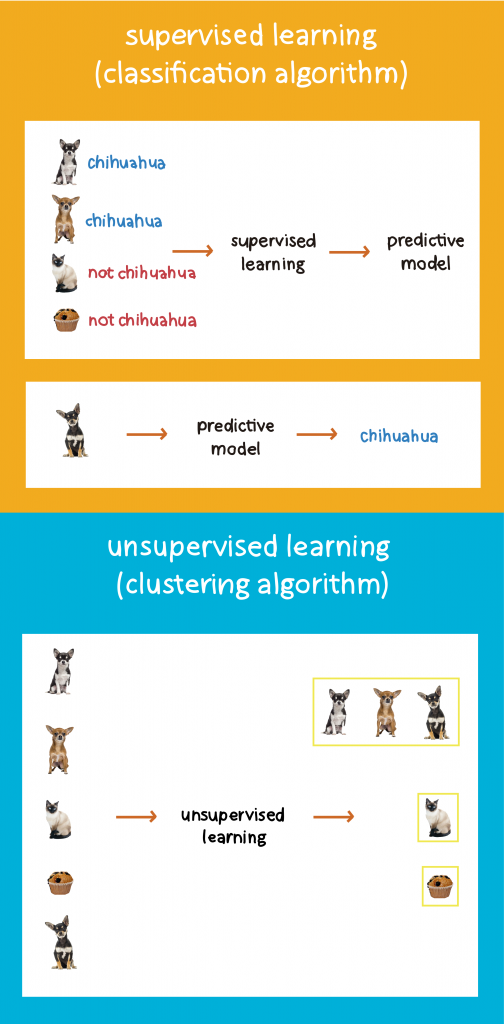

Machine learning refers to a particular type of programming in which an algorithm is not designed step by step by a human programmer (as in the case of rule-based systems) [Figure 1]. Rather, it is generated through a training process that can be either supervised or unsupervised [Figure 2]. In both cases, an optimization function is fed with a huge amount of data in order to extract certain patterns from it. In general, the greater the amount of data, the more effective the algorithm and the more complex the tasks it can execute. This is where “data extractivism” enters the scene: over time, the recording of information by data capture platforms has produced giant databases that were later used to train machine-learning algorithms. In other words, the accumulation of data has been one of the necessary conditions for the development of machine learning (Crawford 2021).
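
To make the contrast between rule-based programming and a supervised training process concrete, the following minimal Python sketch compares a hand-written rule with a rule produced by an optimization over labelled examples. It is illustrative only. The toy data, the single-threshold model and the function names (`rule_based_classifier`, `train_threshold`, `make_classifier`) are hypothetical and do not correspond to any system discussed in this text. Real machine-learning systems optimize far more complex models over far larger datasets.

```python
from typing import Callable, List, Tuple

# Hypothetical toy example: classify a single number as 0 or 1.


def rule_based_classifier(x: float) -> int:
    """Rule-based system: a human programmer fixes the rule step by step.
    The threshold 5.0 is an arbitrary, hand-chosen value."""
    return 1 if x > 5.0 else 0


def train_threshold(data: List[Tuple[float, int]]) -> float:
    """Supervised 'training': search for the threshold that minimizes
    the number of misclassified labelled examples."""
    candidates = sorted({x for x, _ in data})

    def errors(t: float) -> int:
        # Count the examples that the rule "x > t -> 1" gets wrong.
        return sum(1 for x, y in data if (1 if x > t else 0) != y)

    # The optimization step: keep the candidate with the fewest errors
    # (ties go to the smallest candidate, because min() keeps the first).
    return min(candidates, key=errors)


def make_classifier(threshold: float) -> Callable[[float], int]:
    """Turn the learned parameter into a usable decision rule."""
    return lambda x: 1 if x > threshold else 0


if __name__ == "__main__":
    # Hypothetical labelled training set.
    training = [(1.0, 0), (2.0, 0), (3.0, 0), (7.0, 1), (8.0, 1), (9.0, 1)]
    learned = train_threshold(training)
    model = make_classifier(learned)
    print(learned)                 # 3.0
    print(model(2.5), model(3.5))  # 0 1
```

The design point is the one made in the text. In the first function the decision rule is written by a person. In the second it is derived from data, so different or additional labelled examples change the rule that is learned.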

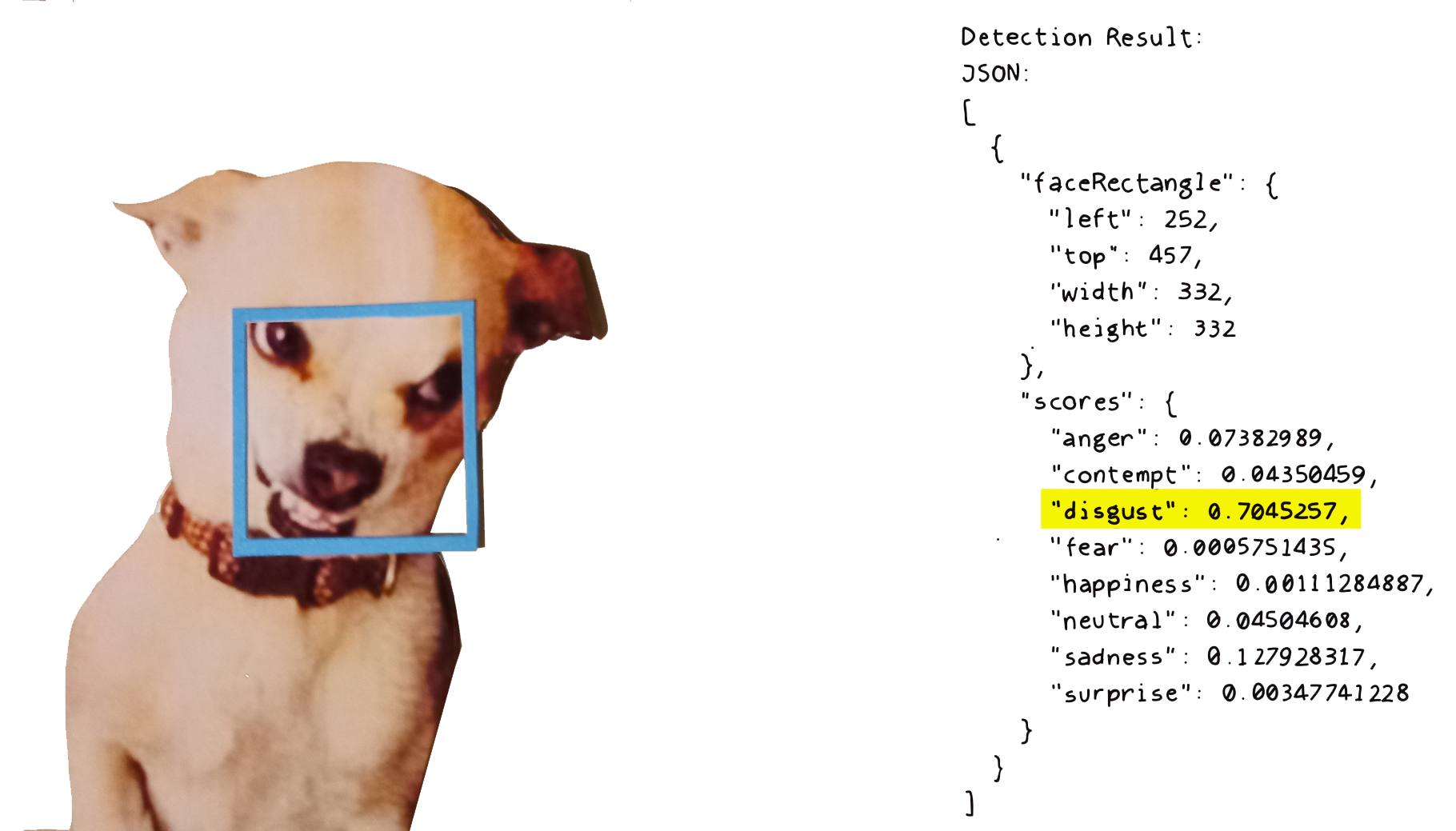

Recent developments in machine learning have allowed algorithms to achieve highly complex tasks that would be very difficult (or simply impossible) for a human being to program step by step. At the same time, in many cases humans do not understand how the trained algorithms operate, so that these ultimately become true “black boxes” for automating complex tasks. Furthermore, many scholars have suggested that this training process reifies already existing social structures (class, gender, race), presenting them as if they were “natural” patterns. This process has been called “machine bias”. “Black box” and “machine bias” are pivotal problems of machine learning which we have discussed elsewhere (Celis & Schultz 2020).

What interests us here is that, thanks to a training process, machine learning algorithms make it possible to automate highly complex tasks. As mentioned, the larger the training data set, the greater the complexity of the automatable tasks and their effectiveness. Data extractivism is key at this point since it allows the generation of training sets. From this perspective, it is possible to establish a link between data extractivism, automation, and the consequent expulsion of human labour from the productive arena. This is the link between data extractivism, machine learning and capitalist valorisation that we would like to explore from three different theoretical perspectives in the following sections.

Preliminary Background

Before turning to these three perspectives, let us briefly mention some conceptual antecedents that can help situate current reflections on data extractivism within the broader history of a Marxist tradition that has tried to think about the role of information and communication in the process of capitalist valorisation.

In a famous and controversial article from 1977, Dallas W. Smythe defined communication as “the blind spot of Western Marxism”. For this Canadian author, Western Marxism had thought of communication only from the perspective of ideology, that is, as a superstructural reflection of the material base or, at best, as an ideological apparatus aimed at the reproduction of the relations of production. According to Smythe, Marxism had until then never set itself the task of thinking of the media as part of the cycle of valorisation of capital. Taking on this task, Smythe suggests that the commodity produced by the media is not the message but the audience itself, a commodity that is then sold to advertising agencies. This audience-commodity is the result of a labour process in which the viewer actively participates. In other words, the audience is a commodity produced by the viewer’s labour, quantifiable in terms of attention time.

Bill Livant and Sut Jhally (1986) illustrate Smythe’s argument with private free-to-air television (“free” but not State-funded). These channels invest fixed capital in the production of their programming, which is then combined with the attention labour of the audience (which constitutes the variable capital). The channel then sells that attention to advertising agencies for a sum greater than the fixed capital invested in the production of the programming, thus producing surplus value. In other words, part of the audience’s attention labour is remunerated with the programming consumed, while another part is not. The surplus value produced by the channel can be increased in absolute terms (by increasing the proportion of advertising space relative to programming), but, as with the working day in Marx’s analysis, the absolute increase in attention time has a limit. For this reason, channels must seek to produce relative surplus value, that is, not to make viewers see a greater amount of advertising but to make them watch it more intensely. To this end, the advertising message must be personalized. The personalization of the audience is the condition of possibility for the transition from absolute surplus value (formal subsumption of watching) to relative surplus value (real subsumption) within the attention economy (Celis Bueno 2017).
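
The absolute/relative distinction drawn above can be sketched schematically by analogy with Marx’s rate of surplus value, which is surplus labour time divided by necessary labour time. The symbols are an illustrative assumption introduced here and are not Livant and Jhally’s notation:

- $t_p$ is the viewing time devoted to programming, the “remunerated” part of attention labour.
- $t_a$ is the viewing time devoted to advertising.
- $T$ is the maximum time a viewer can watch.
- $V_{\text{ads}}$ is the sum the channel obtains from advertisers.
- $\kappa$ is a hypothetical measure of how much the channel can obtain per unit of advertising time.

```latex
\begin{aligned}
e_{\text{att}} &= \frac{t_a}{t_p}, \qquad t_p + t_a \le T
  && \text{(absolute: raise } t_a \text{ relative to } t_p\text{, bounded by } T)\\
V_{\text{ads}} &= \kappa \, t_a
  && \text{(relative: raise } \kappa \text{ through personalization, } t_a \text{ fixed)}
\end{aligned}
```

Because $t_p + t_a \le T$, the ratio $e_{\text{att}}$ cannot grow indefinitely, which is the limit compared above to the working day. Personalization works instead on $\kappa$, making each unit of attention more “intense” rather than longer.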

Authors such as Christian Fuchs (2015) and Mike Lee (2011) have applied the arguments of Smythe and of Livant and Jhally to explain the capitalist valorisation process on sites like Google and Facebook, a process that arises mainly from the sale of highly personalized advertising space. In traditional television, the audience is personalized through very limited measurement systems (such as the “people meter”). On the internet, by contrast, the personalization of the audience is achieved through a series of tools that generate highly complex digital profiles. Data extraction operates as the mechanism of information recording and processing that enables this audience customization. Digital platforms, meanwhile, are the infrastructure behind this data capture. An important part of this business model depends on the ability of platforms to capture and process data in order to generate user profiles that allow them to market highly personalized human attention. In other words, thanks to data extraction, advertising platforms can increase the production of relative surplus value of attention much more efficiently than television. This means that the user’s labour is twofold. On the one hand, it implies the attention necessary to produce the audience-commodity that is then marketed by the platform. On the other hand, users’ online activities generate data that is then used to personalize our attention and thus make us “watch more intensely”.

A second important conceptual reference for thinking about data extractivism in platform capitalism is the category of “free labour” proposed by Tiziana Terranova (2000; 2004). This concept arises within the tradition of Italian post-workerism. One of the main contributions of this line of contemporary Marxism is its attempt to redefine the category of labour in the light of technical transformations in the sphere of production. In this endeavour, post-workerism explores the category of labour beyond the factory, including domestic labour, creative labour and cultural consumption. From this perspective, Terranova uses the notion of “free labour” to explain the appropriation of user data in the context of platform capitalism. This concept comes from a series of feminist authors (among them Silvia Federici) who had used it to define domestic labour. Although essential for the reproduction of the labour force later exploited by capital, such labour is not remunerated, which deepens asymmetric gender relations. Terranova thus establishes an analogy between domestic labour and users’ online activity. In both cases, we are dealing with productive labour (human activity that produces surplus value) that is not paid. With this analogy, Terranova seeks to extend the analysis of Livant and Jhally mentioned above. Following Antonio Negri (2008), Terranova argues that in contemporary capitalism the process of valorisation of capital goes beyond the walls of the factory and colonizes the entire social realm. For this reason, it is necessary to speak of a “social factory” (Negri 2008), that is, of the subsumption of the totality of social space to the production of surplus value. Data extractivism would be one of the ways in which capital captures this surplus value produced outside the traditional factory.
Data extraction allows not only the personalization of an audience for advertising purposes, but also the modelling of the production process itself to produce increasingly “tailor-made” commodities, thus reducing production costs and shortening the cycles of profit return. The Toyotist model of Taiichi Ohno (1988) sought to achieve these objectives through sophisticated market studies that involved the paid labour of experts. In platform capitalism, by contrast, it is the capture and (highly automated) processing of data that offers the knowledge necessary to do so. Data extractivism transforms our online activity (and not only this) into a new form of unpaid productive labour. Having presented these theoretical antecedents, let us move on to the three perspectives from which to think about the relationship between machine learning and data extractivism in contemporary capitalism.

First Perspective: Data Rentism

The first perspective from which to approach data extractivism is Carlo Vercellone’s thesis on the “becoming rent of capital” (2005; 2007). For Vercellone, the process of valorisation of capital in post-industrial capitalism does not occur only inside the factory, but has expanded into the so-called “social factory” (Negri 2008). As a result, it is becoming increasingly difficult to distinguish between labour time and leisure time. This in turn means that abstract labour time can no longer operate as a measure of value and, therefore, as a measure of the rate of exploitation of labour. In other words, post-industrial capitalism challenges the validity of Marx’s labour theory of value (Negri 2008) and thereby demands new conceptual apparatuses to explain capitalist exploitation.

One of Vercellone’s central theses is that contemporary capitalism produces value through the appropriation of the common. Although this common also includes natural resources, for Vercellone it refers mainly to cultural products that circulate in the social factory and that had until now remained external to capital’s valorisation process. This thesis implies two things. First, in the context of the social factory, capital would no longer organize productive labour (as it did within the industrial factory). In this context, therefore, capital no longer appears as productive (as a source of technical development of the productive forces), but limits itself to the appropriation and commodification of the common in domains no longer organized or directed by it. Second, and as a consequence of the foregoing, Vercellone proposes the thesis of the “becoming rent of capital”. Capitalism would no longer be productive (producing surplus value within the factory) but mainly rentier (appropriating the common and extracting rent from it without producing anything in the process).

In a recent text, Andrea Fumagalli et al. (2018) use Vercellone’s thesis to explain the production of value by digital platforms, particularly in the case of Facebook. For these authors, in the case of this social network

“the process described as becoming rent of capital is evident: Facebook does not obtain profit merely from organizing the paid labour of its relatively few employees, but extracts rent from the common produced by the non-paid labour of its users” (2018 p. 35).

As we can see, Fumagalli et al. combine Vercellone’s thesis about rentism with Terranova’s analysis of unpaid labour. We can extend the reading of Fumagalli et al. in order to understand the production of value in the case of data extractivism. From the perspective of these authors, data extractivism appears as an important example of the becoming rent of capital and of the new forms of appropriation of the common. Through data capture, digital platforms record user activity, which is then processed and commercialized. Data extractivism would be a mechanism for capturing and appropriating the common, which is then used to generate rent (and no longer profit). This common is recorded and then used to train machine-learning algorithms. These algorithms in turn make it possible to automate the production of goods and thus accelerate capital’s valorisation process. Capital would be generating rent from the expropriation of the common through the extraction of data. Furthermore, this process contributes to a general tendency towards automation, accelerating the expulsion of human labour from the productive sphere.

Second Perspective: Critical Theory of Value

Let us now turn to the second perspective. At the opposite pole from Vercellone and Italian post-workerism are the authors associated with the so-called “critical theory of value” (Kurz 2014; Postone 2016; Jappe 2017). In contrast to the Italian authors, the critical theory of value holds that “thinking value dissociated from abstract labour time” is a serious conceptual error that hinders a proper understanding of contemporary capitalism. Anselm Jappe (2017 p. 59), for example, argues that, despite all the technological transformations of recent decades, the social relations of our time are still determined by the (historically specific) categories of labour and value. From this point of view, he adds, “we find ourselves in practically the same situation as in the 19th century, when Marx developed his critique of capitalism” (Jappe 2017 p. 59). The expansion of capitalism has thus not implied a crisis of the labour theory of value. On the contrary, the scope of value has extended “to ever wider spheres of human life” (Jappe 2017 p. 59).

This approach requires a very specific definition of value as a “social relation measured in abstract human labour time”. From this perspective, therefore, only living labour, reduced to chronological time, is a source of value. Machines (including machine-learning algorithms), for their part, do not produce value. They can only transmit to the product the value already contained in themselves (the abstract labour time necessary for their manufacture). Thanks to technical development, driven by competition between producers, each capitalist “makes an additional profit for himself, but contributes to reducing the mass of global value, and then to undermining the capitalist system as such” (Jappe 2017 p. 61).

From this perspective, data extractivism should not be considered a source of value. On the contrary, the link between data extraction and machine learning accounts for a more general program of automation that seeks to gradually expel living labour from the productive sphere (which diminishes the global mass of value).

This argument stands as a criticism of the rentism thesis described above. As George Caffentzis (2013 p. 121) argues, the rentism thesis defended by the theorists of “cognitive capitalism” forgets that the rent produced by the appropriation of the common is nothing more than the transfer of value from other, less developed spheres of production where the proportion of living labour remains high. Rentism does not produce value. It only captures value produced in other sectors of production in which the organic composition of capital is still low. To the extent that these sectors disappear, there will be less and less global mass of value to transfer, revealing the fiction on which the thesis of the “becoming rent of capital” stands.

Within this perspective, the work of Ramin Ramtin (1991) is relevant. Ramtin argues that a proper Marxist analysis of technology requires a distinction between mechanisation and automation. Following Marx’s (2004) definition of the machine (composed of motor, transmission and tool), Ramtin argues that mechanisation is the mechanical replacement of manual labour, in which a machine takes the place of the human worker. This process, however, still requires a human operator/supervisor to perform the “control function”. Automation, by contrast, is linked to cybernetics’ discovery of the notion of feedback, that is, the ability of a machine to automatically activate or deactivate its own functioning. This implies a definition of the machine that adds a fourth element to the three already identified by Marx: the information that regulates its operation. In the context analysed by Babbage and Marx, this fourth element still belonged to the domain of human labour. For this reason, Marx (2006; 1973) postulates that in large-scale industry human labour is reduced to the role of “watchman”. With the development of cybernetics and the notion of information (feedback), however, this fourth element of the machine can itself be automated. This completely expels living labour from the production process, even the labour that had been reduced to a supervisory role. In this way, Ramtin (1991 p. 20) suggests, capitalism would have signed its own “death certificate”. It would be eliminating the last residues of living labour still involved in commodity production, and thus accelerating the fall in the rate of profit. From Ramtin’s point of view, data extractivism and machine learning would be the most recent episode in a long history of automation triggered by the basic contradiction of capital: reducing human labour to a minimum while keeping human labour as the measure and unit of value.
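
Ramtin’s distinction between mechanisation and automation turns on who performs the “control function”. The minimal Python sketch below contrasts the two under stated assumptions. The machine is reduced to a heater that is either on or off. The temperature dynamics (+1.0 per step when on, -0.5 when off) and the function names are hypothetical. In the mechanised version, the on/off decisions come from an external operator. In the automated version, the machine reads information about its own output (feedback) and switches itself on or off.

```python
from typing import List

# Hypothetical dynamics for a toy heating machine.
HEAT_PER_STEP = 1.0   # temperature gain per step when the machine is on
LOSS_PER_STEP = 0.5   # temperature loss per step when the machine is off


def step(temp: float, on: bool) -> float:
    """Motor, transmission and tool, abstracted into one state update."""
    return temp + HEAT_PER_STEP if on else temp - LOSS_PER_STEP


def run_mechanised(start: float, operator_commands: List[bool]) -> List[float]:
    """Mechanisation: the control function stays with a human operator,
    who supplies every on/off decision from outside the machine."""
    temp, history = start, []
    for command in operator_commands:
        temp = step(temp, command)
        history.append(temp)
    return history


def run_automated(start: float, setpoint: float, steps: int) -> List[float]:
    """Automation: a feedback loop in which information about the machine's
    own output (the measured temperature) regulates its operation."""
    temp, history = start, []
    for _ in range(steps):
        # The "fourth element": information regulating the machine's operation.
        on = temp < setpoint
        temp = step(temp, on)
        history.append(temp)
    return history


if __name__ == "__main__":
    print(run_mechanised(18.0, [True, True, False]))  # [19.0, 20.0, 19.5]
    print(run_automated(18.0, 20.0, 6))  # [19.0, 20.0, 19.5, 20.5, 20.0, 19.5]
```

An on/off (bang-bang) controller is the simplest possible feedback loop and is chosen only for readability. It should not be read as a model of the machine-learning systems discussed elsewhere in this text.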

It is useful to mention here Ekbia and Nardi’s (2017) thesis that current automation processes are built on a gigantic amount of precarious human labour that is often unpaid, unrecognized or invisible. The authors use the category of “heteromation” to define this relationship between highly complex automation processes and the human labour that makes this automation possible. That labour is mostly invisible and carried out in the most precarious regions of global capitalism.

As we will argue in the third and final perspective, however, the notions of automation and heteromation can be complemented with that of “immaterial labour” (Lazzarato 1996) to offer an alternative to the “critical theory of value”. Before that, we offer a brief excursus that considers the automation process from a more optimistic and technophilic perspective.

Fully-Automated Luxury Communism

It is interesting to note the position of Aaron Bastani (2019) and of Alex Williams and Nick Srnicek (2015). Coming from the new wave of “English Marxist Accelerationism”, these authors do not conceive of data extractivism as something negative per se. To support their argument, they focus on the distinction made by Marx in the Grundrisse (1973) between “wealth” and “value”. The first refers to the development of the productive forces of a society, while the second refers to a particular type of social relationship founded on abstract labour time. The central contradiction of capitalism would then be twofold. On the one hand, capitalism develops the productive forces in an increasingly autonomous way with respect to human labour time, thus generating wealth. On the other, it continues to organize society around human labour time as the measure of social relations, managing that wealth through the value form. In the particular case of “platform capitalism”, data extraction is put at the service of reproducing “value” and not necessarily of producing “wealth”. Data extraction is used to accelerate the production and exchange of commodities (measured in terms of abstract labour) and not to manage the productive forces beyond the value form. For these authors, however, post-capitalist society will be one that can use all the productive forces created by capital (wealth), but stripped of the value form as the measure of social relations. The authors thus define a post-capitalist utopia in which the productive forces are at the service of human time (and not, as in capitalism, one in which human labour time is at the service of capital). For this task, data extractivism can be a fundamental tool for managing society and redistributing production.

Aaron Bastani, in particular, coins the notion of “fully-automated luxury communism” to define this post-capitalist utopian society. Data extractivism, used today to reproduce the value form in a regime of increasing wealth, appears in these authors’ project as the key to managing wealth beyond the value form. Data extractivism would thus function as a kind of Project Cybersyn in a world where production has been completely automated. Unlike the two previous theoretical perspectives, the position of these authors demands that data extraction be dissociated from the value form. The post-capitalist utopian society will maintain the productive capacity of platform capitalism, but replace the value form with novel forms of redistribution of wealth.

Third Perspective: Towards an Informational Theory of Value

The third and final perspective summarizes an ongoing series of hypotheses about the role of information in the valorisation cycle of capital. As a starting point we use two contemporary references: the redefinition of the self-valorisation cycle of “computational capitalism” developed by Jonathan Beller (2018) and the provocative thesis of a post-capitalist society proposed by McKenzie Wark (2019).

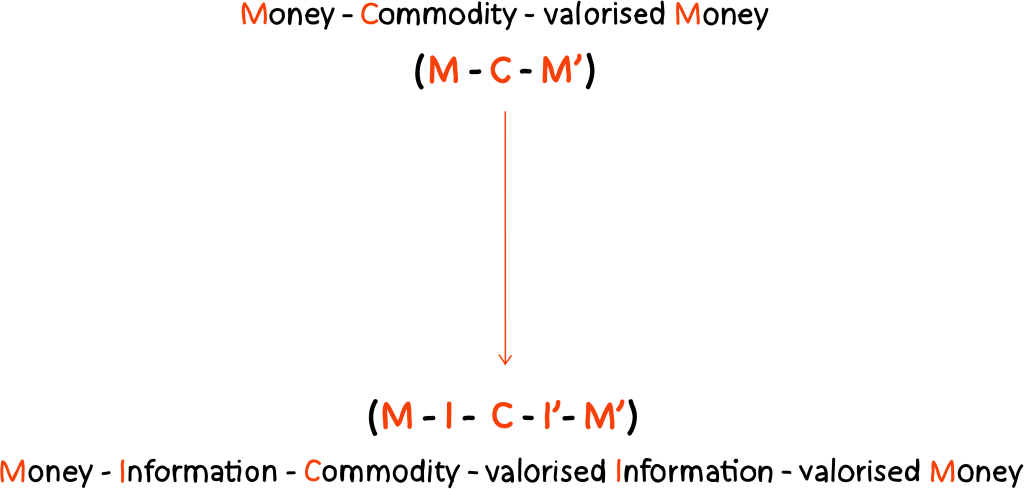

Beller (2018 p. 162) tries to move beyond the debates about the validity or obsolescence of Marx’s labour theory of value by rewriting the formula Money – Commodity – Valorised money (M-C-M’) as M-I-C-I’-M’. For Beller, this “I” refers to the image, thus continuing his thesis on the attention economy and the society of the spectacle (2006). Wark, for his part, advances the thesis that a large portion of contemporary societies inhabit a post-capitalist mode of production (2019 p. 43). In this mode of production, the central opposition is no longer that between proletarians and capitalists (between labour and capital) but between “hackers” and “vectorialists”. Hackers are those who produce information, and vectorialists are those who capture it, exploit it and control its application. The thesis that we are trying to develop continues Wark’s proposal. From there, we reread Beller’s thesis (2018), but we understand the “I” not as image, but as information. Contemporary capitalism thus appears to us as a mode of production characterized by the following cycle: Money – Information – Commodity – Valorised information – Valorised money.
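
The two circuits can be set side by side as follows. This is only a schematic restatement of the formulas named in the paragraph. $M' = M + \Delta M$ is Marx’s notation for the increment (surplus value), and reading $I$ and $I'$ as information and valorised information follows the cycle proposed above.

```latex
\begin{aligned}
&M \longrightarrow C \longrightarrow M', \qquad M' = M + \Delta M
  && \text{(Marx: general formula of capital)}\\
&M \longrightarrow I \longrightarrow C \longrightarrow I' \longrightarrow M'
  && \text{(Beller's circuit, with } I \text{ read as information rather than image)}
\end{aligned}
```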

To a large extent, this thesis would continue the now classic formulation of Maurizio Lazzarato (1996) about “immaterial labour”. For him, immaterial labour refers to human activity that generates the information necessary for the production of a commodity (and therefore should not be confused with the category of abstract labour). In contemporary capitalism, this implies that many human activities not traditionally considered labour begin to be treated as a source of value. One of our own contributions is to understand this category of information through the prism of Gilbert Simondon (2016).

Unlike the theory of communication (Jakobson) and the mathematical theory of information (Shannon), Simondon (2016) defines information not as an internal characteristic of a message, but based on its ability to activate energy transformation processes:

“To be or not to be information does not depend only on the internal characteristics of a structure; information is not a thing, but the operation of a thing that reaches a system and produces a transformation there. Information cannot be defined beyond that act of transforming incidence and the reception operation. It is not the sender who determines a structure as information”

Gilbert Simondon (2016, p. 139)

The information function, therefore, is the modification of a local reality (a change of energy state in a metastable system) from an incident signal (Simondon 2016 p. 140). Likewise, a receiver of information is virtually “every reality that does not entirely possess by itself the determination of the course of its becoming” (Simondon 2016 p. 140). In other words, every metastable system is a potential receiver of information, that is, a receiver of an incident signal that triggers a state transformation. For its part, any incident signal that triggers an energy transformation will be considered information.

The third theoretical perspective proposed here, then, would seek to: a) include information (in its Simondonian sense) as a central element of capital’s valorisation cycle; b) identify the accumulation processes of that information; and c) historicize the link between information and living labour in the different phases of capitalism. Let’s briefly review this last point, establishing four moments in the relationship between information and labour:

- In artisan labour (formal subsumption), the information necessary to produce a commodity is an essential and inseparable part of living labour. Furthermore, each time the artisan produces a new commodity, a small learning process takes place in which the information contained in living labour is amplified. This amplification of the information involved in the labour process remains part of living labour, and can only be expropriated by capital through absolute surplus value.

- In industrial labour (real subsumption), by contrast, the information that previously belonged to the craftsman is objectified in the machinery, reducing living labour to manual repetition stripped of its informational dimension. In the industrial mode of production, capitalism has appropriated the information that previously belonged to living labour, thus accelerating the production process and generating relative surplus value. The problem with industrial capitalism, however, is its difficulty in modifying the production process in the light of new information. That is, information enters the production of commodities, but it is not amplified in each new cycle (nor can it be easily reabsorbed by the mechanised production line).

- Post-industrial labour arises in response to the rigidity of industrial labour. Its objective is to make the production process more flexible. To this end, post-industrial (cognitive) capitalism re-incorporates into living labour (variable capital) the informational dimension that was previously objectified in fixed capital. This allows a permanent updating of the production process, adjusting it to highly volatile capital flows. Unlike in artisan labour, however, the amplification of information in each productive cycle is not restricted to the human worker, but is captured by capital through new information technologies. Living labour introduces information and flexibility into the production process, which are then captured by information technologies.

What about the relationship between information and living labour in the context of data extractivism? From the perspective of an informational theory of value, it could be suggested that data extractivism functions as a capture apparatus. This apparatus seeks to accelerate the separation between information and living labour typical of industrial capitalism, while maintaining the flexibility of post-industrial capitalism. Machine-learning algorithms make it possible to combine the radical automation of production with flexibility, allowing that automation to adapt to the speed and volatility of contemporary capitalism. In previous technical eras, such flexibility remained the exclusive capacity of living labour. Furthermore, we could argue that the link between living labour and information in the context of data extractivism and machine-learning technologies exemplifies the link suggested by Gilles Deleuze (1995a; 1995b) between information technologies, control societies and post-industrial capitalism.

Translated from Spanish by Catalina Büchner

References

Alquati, R. (1962). Composizione organica del capitale e forza-lavoro alla Olivetti. Quaderni Rossi, 2, 63-98.

Bastani, A. (2019). Fully Automated Luxury Communism: A Manifesto. London: Verso.

Beller, J. (2006). The Cinematic Mode of Production: Attention Economy and the Society of the Spectacle. New Hampshire: University Press of New England.

Beller, J. (2018). The Message is Murder: Substrates of Computational Capital. London: Pluto Press.

Caffentzis, G. (2013). In Letters of Blood and Fire: Work, Machines, and Value in the Bad Infinity of Capitalism. Oakland: PM Press.

Celis Bueno, C. (2017). The Attention Economy: Labour, Time and Power in Cognitive Capitalism. London: Rowman & Littlefield.

Celis, C., & Schultz, M. J. (2020). Memo Akten’s Learning to See: from machine vision to the machinic unconscious. AI & Society, 35(4), 1-11. https://link.springer.com/article/10.1007/s00146-020-01071-2

Crawford, K. (2021). Atlas of AI. New Haven: Yale University Press.

Davenport, T. H., & Beck, J. C. (2001). The Attention Economy: Understanding the New Currency of Business. Cambridge: Harvard Business School.

Deleuze, G. (1995a). Control y devenir. In Conversaciones (pp. 265-276). Valencia: Pre-Textos.

Deleuze, G. (1995b). Post-scriptum sobre las sociedades de control. In Conversaciones (pp. 277-282). Valencia: Pre-Textos.

Dyer-Witheford, N., Mikkola Kjosen, A., & Steinhoff, J. (2019). Inhuman Power. London: Pluto Press.

Ekbia, H., & Nardi, B. (2017). Heteromation, and Other Stories of Computing and Capitalism. Cambridge, MA: The MIT Press.

Fuchs, C. (2015). Dallas Smythe Today–The audience commodity, the digital labour debate, Marxist political economy and critical theory. Prolegomena to a digital labour theory of value. In C. Fuchs (Ed.), Marx and the Political Economy of the Media (pp. 522-599). Leiden: Brill.

Fumagalli, A., Lucarelli, S., Musolino, E., & Rocchi, G. (2018). El trabajo (labour) digital en la economía de plataforma: el caso de Facebook. Hipertextos, 6(9), 12-40. http://revistahipertextos.org/wp-content/uploads/2015/12/1.-Fumagalli-et-al.pdf

Gago, V., & Mezzadra, S. (2017). A Critique of the Extractive Operations of Capital: Toward an Expanded Concept of Extractivism. Rethinking Marxism, 29(4), 574-591. https://doi.org/10.1080/08935696.2017.1417087

Jappe, A. (2017). Trayectorias del capitalismo: del sujeto autómata a la automatización de la producción. In A. Vera & S. Navarro (Eds.), Bifurcaciones de lo sensible (pp. 59-67). Santiago: RIL Editores.

Jhally, S., & Livant, B. (1986). Watching as working: The valorization of audience consciousness. Journal of Communication, 36(3), 124-143. https://onlinelibrary.wiley.com/doi/10.1111/j.1460-2466.1986.tb01442.x

Kurz, R. (2014). The Crisis of Exchange Value: Science as Productive Force, Productive Labour, and Capitalist Reproduction. In N. Larsen, M. Nilges, N. Brown, & J. Robinson (Eds.), Marxism and the Critique of Value (pp. 17-76). Chicago: MCM’ Publishing.

Lazzarato, M. (1996). Immaterial Labour. In P. Virno & M. Hardt (Eds.), Radical Thought in Italy: A Potential Politics. Minneapolis: University of Minnesota Press.

Lee, M. (2011). Google ads and the blindspot debate. Media, Culture & Society, 33(3), 433-447.

Marx, K. (1973). Grundrisse: Foundations of the Critique of Political Economy (M. Nicolaus, Trans.). New York, London: Random House; Penguin.

Marx, K. (2004). Miseria de la filosofía. Madrid: Edaf.

Marx, K. (2006). El Capital: crítica de la economía política (Vol. 1). México: Fondo de Cultura Económica.

Massumi, B. (2018). 99 Theses on the Revaluation of Value: A Postcapitalist Manifesto. Minneapolis: University of Minnesota Press.

Negri, A. (2008). Reflections on Empire. Cambridge: Polity Press.

Ohno, T. (1988). Toyota Production System: Beyond Large-Scale Production. Cambridge, MA: Productivity Press.

Ramtin, R. (1991). Capitalism and Automation. London: Pluto Press.

Simondon, G. (2007). El modo de existencia de los objetos técnicos. Buenos Aires: Prometeo Libros.

Simondon, G. (2016). Comunicación e Información. Buenos Aires: Editorial Cactus.

Smythe, D. W. (1977). Communications: blindspot of western Marxism. Canadian Journal of Political and Social Theory, 1(3), 1-28. https://journals.uvic.ca/index.php/ctheory/article/view/13715

Srnicek, N. (2018). Capitalismo de Plataformas. Buenos Aires: Caja Negra.

Srnicek, N., & Williams, A. (2015). Inventing the Future. London: Verso.

Terranova, T. (2000). Free Labor: Producing Culture for the Digital Economy. Social Text, 18(2), 35-58.

Terranova, T. (2004). Network Culture: Politics for the Information Age. London: Pluto Press.

The Economist (2017). The world’s most valuable resource is no longer oil, but data. Retrieved from https://www.economist.com/leaders/2017/05/06/the-worlds-most-valuable-resource-is-no-longer-oil-but-data

Vercellone, C. (2005). The Hypothesis of Cognitive Capitalism. https://halshs.archives-ouvertes.fr/halshs-00273641

Vercellone, C. (2007). From formal subsumption to general intellect: Elements for a Marxist reading of the thesis of cognitive capitalism. Historical Materialism, 15(1), 13-36.

Wark, M. (2019). Capital Is Dead: Is This Something Worse? London: Verso.

Zuboff, S. (2020). La era del capitalismo de la vigilancia. Barcelona: Paidos Ibérica.